At the recent SMX West conference, Google execs made waves when they discussed making exceptions for sites that the overall ranking algorithm affects in unexpected ways. A recent report from Search Engine Land details Matt Cutts' explanation of search engine “white lists,” but, ultimately, Cutts says the recent Panda algorithmic update demands high-quality content from all sites without exception.

Cutts explained that the first priority at Google is to do as much as possible algorithmically. Still, he said that there are times when an algorithm may unjustly hurt a site, since no machine is 100 percent accurate.

Search Engine Land quotes Cutts as saying that in the instance of spam, “the stuff you can’t catch you are willing to take manual action on to remove because that is abuse and it is a bad user experience.” He alluded to the idea that there may be a similar approach to manually protect quality sites that could be punished by an algorithm.

Since then, several online forums have claimed that Google has “favorite” spam content sites that are safe from the most recent algorithmic update. (A notable article from SEO Book went as far as to say, “If you make it past Google's arbitrary line in the sand there is no limit to how much spamming and jamming you can do.”)

However, in Search Engine Land's most recent transcript report, Cutts says, “It is also important to realize that there are many many algorithms, maybe the majority of the algorithms don't have any exception logs. For example, Panda. We don't have [one], there is no way right now to do any manual exceptions.”

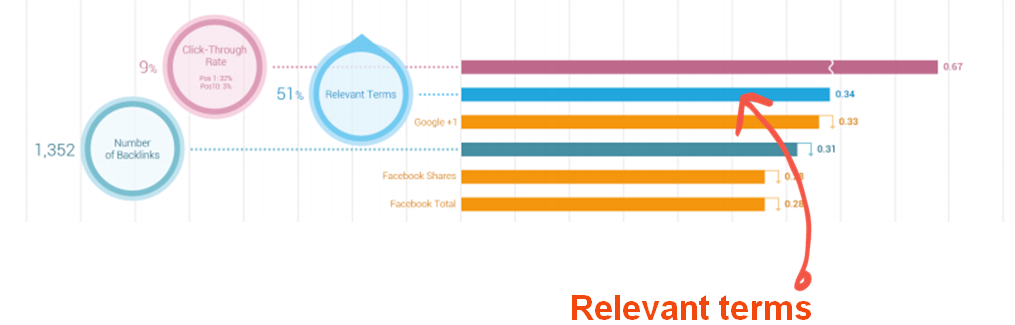

This means the latest algorithmic update demands quality content from all sites equally. Brafton has reported that some larger sites have fallen in rankings, while smaller, specialty sites have prevailed because of niche, authoritative content.