Market leadership is the ability to let the evidence override your preferences and ensure that your strategy is dictated by user behavior.

When you run experiments, such as changing an element on your mobile display and measuring user engagement, AI is good at analyzing the performance and serving up the metrics. It’s a good starting point as you pave the way to market domination. But, as you know, artificial intelligence has its limitations too.

This article explores why you’d use AI-powered tools in your split testing, and under which circumstances you might want to give them a miss.

What Is A/B Testing?

A/B testing, or split testing, is a research method that compares two variants of a single touchpoint by showing each one to a separate user segment. Your “touchpoint” could be an email headline, a call-to-action button color or display ad copy. The test results determine which option performs better against a specific goal.

Now, don’t get me wrong, it’s much like regular testing, except you’re running a controlled trial on two variants simultaneously, rather than sequentially. An A/B test keeps your strategy tied to statistical conclusions, rather than a hunch.
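
To make “statistical conclusions” a bit more concrete, here’s a minimal Python sketch of a two-proportion z-test, one common way to check whether variant B’s conversion rate differs from variant A’s by more than chance. The function name and the traffic numbers are hypothetical, and real testing platforms may use other methods (Bayesian or sequential tests, for example).

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for H0: A and B share one conversion rate."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled conversion rate under the null hypothesis that both variants perform the same
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical traffic: 5,000 visitors per variant
z, p = two_proportion_z_test(conv_a=200, n_a=5000, conv_b=260, n_b=5000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value (conventionally below 0.05) suggests the difference is unlikely to be noise; it says nothing about whether the difference is large enough to matter for your business.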

There are plenty of ways to A/B test using AI, including:

Ideation

Generative AI tools, such as Gemini and ChatGPT, can scrape your website to identify gaps, generate hypotheses, draft fresh copy or iterate design variations, basically setting up the test parameters for you.

Data Modeling and Analysis

AI takes propensity models up a rung by predicting how segments will behave in the future. Many marketing platforms, like Optimizely Data Platform, GA4 and HubSpot, offer integrated AI models with propensity modeling capabilities that surface statistically significant user trends much faster than humans can.

Real-Time Personalization

Standalone optimization platforms like Kameleoon or Dynamic Yield sit on top of your website, using automation to route users based on past behavior and real-time intent.

Together, these AI tools enable segmented testing (e.g., one test for new visitors and another for repeat customers) and dynamic traffic allocation. In other words, if variation B performs better on a Wednesday afternoon, the AI will automatically route more traffic to it without you having to touch a dial.
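
Vendors don’t publish exactly how their traffic allocation works, but a common technique for this kind of dynamic allocation is a multi-armed bandit. Below is a simplified Thompson sampling simulation in Python, assuming a binary conversion goal and made-up “true” conversion rates. It illustrates the general idea only; it is not how Kameleoon, Dynamic Yield or any specific platform implements it.

```python
import random


def thompson_allocate(true_rates: dict[str, float], visitors: int, seed: int = 42) -> dict[str, int]:
    """Simulate Thompson sampling: send each visitor to the variant whose
    sampled conversion rate (drawn from a Beta posterior) is highest."""
    rng = random.Random(seed)
    # Beta(1, 1) prior, i.e. uniform: one pseudo-success and one pseudo-failure per variant
    successes = {v: 1 for v in true_rates}
    failures = {v: 1 for v in true_rates}
    served = {v: 0 for v in true_rates}

    for _ in range(visitors):
        # Draw a plausible conversion rate for each variant from its current posterior
        draws = {v: rng.betavariate(successes[v], failures[v]) for v in true_rates}
        chosen = max(draws, key=draws.get)
        served[chosen] += 1
        # Simulate whether this visitor converts, using the hypothetical true rate
        if rng.random() < true_rates[chosen]:
            successes[chosen] += 1
        else:
            failures[chosen] += 1
    return served


# Hypothetical true conversion rates, unknown to the algorithm
print(thompson_allocate({"A": 0.03, "B": 0.06}, visitors=10_000))
```

Because each visitor goes to whichever variant wins a random draw from its posterior, the stronger variant gradually earns most of the traffic while the weaker one still gets occasional exploratory visits.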

As with anything else, get it right and you’re away laughing. But when a glitch in the AI system, unclean data or poor modeling forms the basis of your tests, you can just as easily scale a Hollywood-sized disaster, which can be pretty damaging. More on that soon.

Why Marketers Should Be A/B Testing, Like, Everything

In 2026, 93% of marketers report that personalization improves leads or purchases, per HubSpot’s State of Marketing 2026 Report. Think about that for a minute.

To demonstrate the success of personalization, these marketers first had to deliver a personalized experience. To accurately customize that experience, they needed algorithms telling them which song, dress or leather shine to recommend next. To know that, they had to gather a boatload of behavioral data and identify which patterns were statistically significant. For that, they probably ran a test or two.

At this point, you can start picturing the value of not only A/B testing, but solid AI-driven, human-governed A/B testing. DRIP found that e-commerce businesses applying this methodology saw a median 2.88% uplift in revenue per visit (RPV), with the top 10% of its sample achieving a 9.5% lift in RPV. That keeps your CEO and CFO happy, not to mention the long-term gains in engagement and customer retention.

So, why A/B testing in particular, then?

- Faster analysis: The goal of an A/B test isn’t only to find a winner, but to reduce the cost of decision-making by shortening the feedback loop between hypothesis and execution.

- Higher accuracy: Data is the name of the game. A/B testing backs your marketing choices with data-driven evidence, showing which variant statistically performs better, worse or has no conclusive impact.

- Risk mitigation: A/B testing allows brands to establish a confidence benchmark that protects their bottom line before scaling an experience.

- Predictive capabilities: Test results build a bank of proprietary first-party data that helps track long-term consumer shifts, which then inform future decisions.

- Ongoing optimization: Continuous experimentation ensures your user experience evolves with user expectations and algorithmic updates.

- Troubleshooting: If marketing campaigns, pages or assets underperform, A/B testing can isolate friction points without rebuilding the entire funnel.

- Ideation: Feed unexpected results and outliers into future campaign design, or use data-backed, AI-generated hypotheses to drive testing.
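
That “confidence benchmark” usually starts with deciding how much traffic a test needs before you read the results. Here’s a minimal Python sketch of the standard sample-size approximation for comparing two conversion rates. The baseline rate and minimum detectable effect (MDE) are hypothetical inputs, and the defaults (5% significance, 80% power) are common conventions rather than rules.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(baseline: float, mde: float, alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate visitors needed per variant to detect an absolute lift of
    `mde` over `baseline` with a two-sided two-proportion test."""
    p1, p2 = baseline, baseline + mde
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)  # critical value for statistical power
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / mde ** 2)


# Hypothetical: 4% baseline conversion, hoping to detect a lift to 5%
print(sample_size_per_variant(baseline=0.04, mde=0.01))
```

Halving the MDE roughly quadruples the required traffic, which is one reason small tweaks on low-traffic pages often never reach significance.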

Though the benefits are abundant, remember that the validity of your results depends directly on the accuracy of your data. If the data-gathering process isn’t standardized from the jump, your conclusions aren’t statistically valid and your workflow will fall apart.

What Can AI Do for Your A/B Testing?

To answer that question, let’s first look at what a successful A/B test looks like when AI is not involved.

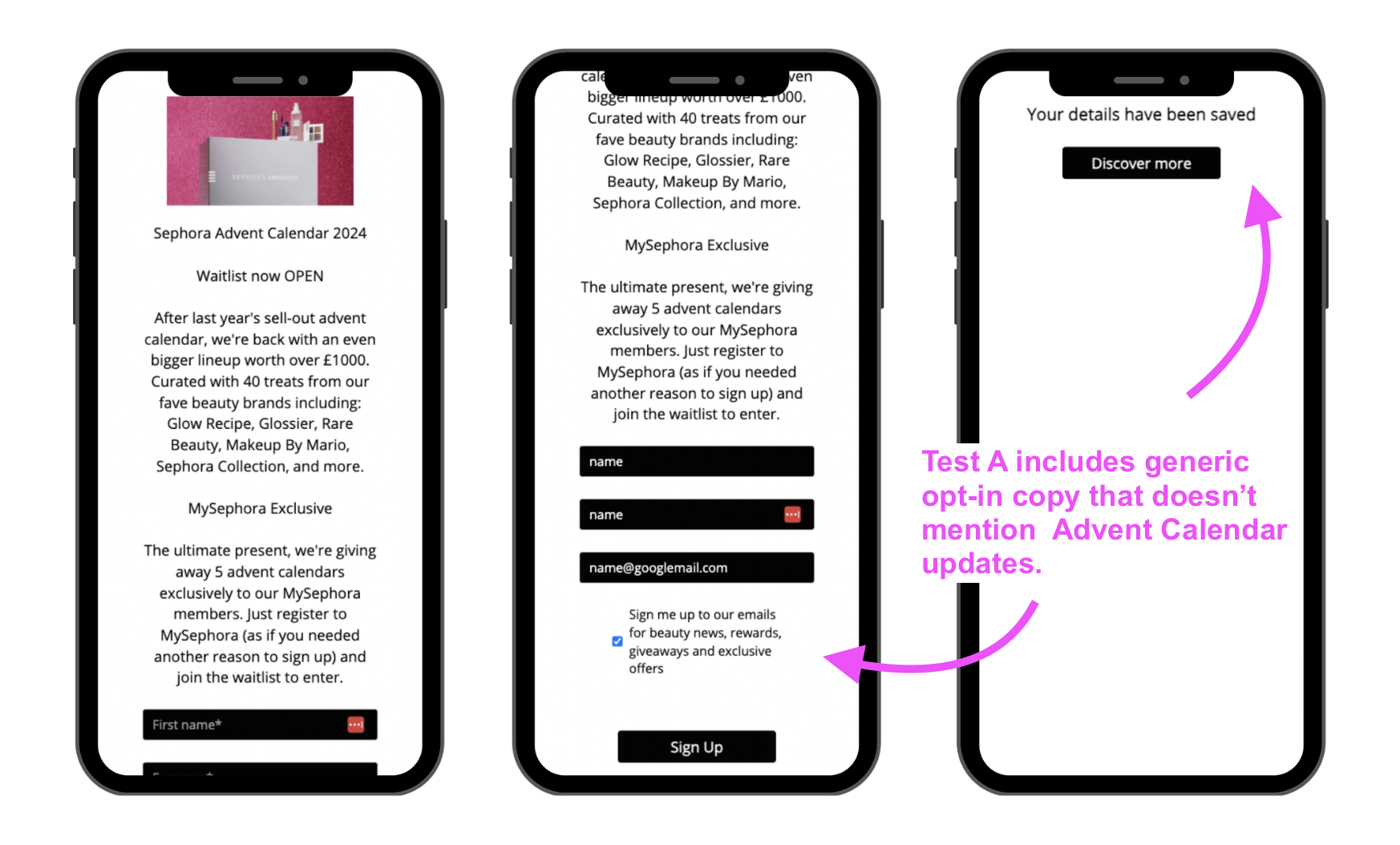

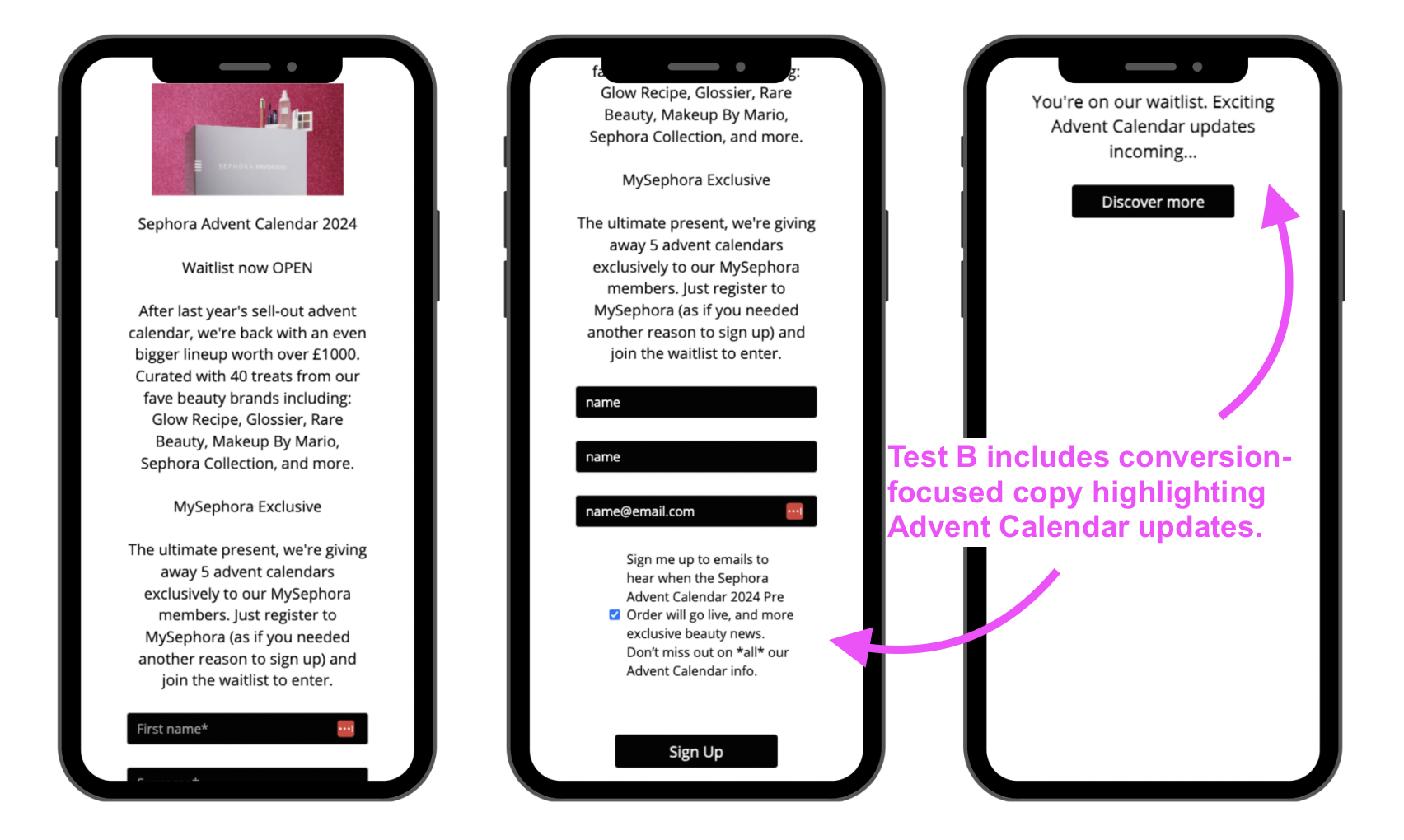

Sephora learned through A/B testing that changing a mere 22 words on its opt-in pages can lead to significant breakthroughs. The brand was using a 24-day gamified digital calendar in its app to enhance the customer experience. While customers were playing, Sephora A/B tested the sign-up box for its physical 2024 Beauty Calendar to see which wording would better convince people to sign up for release notifications.

- Variant A: Didn’t mention the Advent Calendar on the opt-in button or the exit page.

- Variant B: Included specific copy about the Advent Calendar in the opt-in button and exit page.

As it turned out, Variant B achieved a 62% uplift in conversion rate. For Sephora, tying the opt-in to an anticipated product was far more effective than a generic request to learn more.

Variant A

Variant B
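
Sephora’s raw conversion rates aren’t given here, so assuming the 62% figure is a relative uplift, this is the arithmetic behind that kind of number in Python, using hypothetical rates chosen to land on 62%.

```python
def relative_uplift(control_rate: float, variant_rate: float) -> float:
    """Relative uplift of the variant over the control, as a percentage."""
    return (variant_rate - control_rate) / control_rate * 100


# Hypothetical rates (not Sephora's actual figures): 5.0% vs. 8.1% opt-in
print(f"{relative_uplift(0.050, 0.081):.0f}%")  # → 62%
```

The same result is only a 3.1 percentage-point gain in absolute terms, so it’s worth checking which kind of uplift a case study reports.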

Now, layer automation and predictive analysis over those results, and consider the real-world possibilities. By synthesizing massive behavioral datasets, AI and machine learning can suggest the variations that are statistically most likely to succeed. AI-enhanced A/B testing enables hyper-personalization at the individual level, rather than herding your audience by segment. It can even facilitate multivariate tests that analyze thousands of variable combinations simultaneously and show you which ones work best.
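
To see why multivariate testing quickly outgrows manual analysis, here’s a short Python sketch that enumerates every combination of a few hypothetical page elements. The element names, variants and visitor count are made up for illustration.

```python
from itertools import product

# Hypothetical page elements and their candidate variants
elements = {
    "headline": ["H1", "H2", "H3", "H4"],
    "cta_color": ["green", "orange", "blue"],
    "cta_copy": ["Shop now", "Get notified", "Learn more"],
    "layout": ["single-column", "two-column"],
    "hero_image": ["model", "product", "lifestyle", "none", "video"],
}

# Every full-page combination is one cell in the multivariate test
combinations = list(product(*elements.values()))
print(len(combinations))  # 4 * 3 * 3 * 2 * 5 = 360

# Hypothetical traffic split evenly across all cells
visitors = 100_000
print(visitors // len(combinations))  # → 277
```

With 100,000 visitors, each combination gets fewer than 280 visits, and adding one more element with ten variants would push the grid to 3,600 cells. That’s why multivariate tests lean on large datasets and statistical modeling rather than eyeballing each cell.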

You can apply this across multiple use cases, including:

- Email send times and subject lines.

- Placement, colors and copy for CTAs.

- Scarcity language.

- Pop-up timers.

- Product recommendations.

- Pricing thresholds.

- Content sequencing.

- Landing page copy.

- Mobile layouts.

What AI adds to standard A/B testing is speed and scale: it shortens the time to implementation, gathers and analyzes larger datasets and scales far beyond standard “if, then” rules-based personalization tactics.

That is, if you pull it off. And let’s be honest, how many of us have gotten 100% performance out of an LLM on the first try?

What Are the Limitations of AI for A/B Testing?

The limitations of AI-powered A/B testing are not far removed from those of AI in general. But they have pretty serious implications, running from compliance to ethics to a marketer’s psychological well-being.

Data and Privacy

If you work in health care, finance or any other industry that handles sensitive personal data, you’ll want to be very careful about which data you feed to the machine. Leaks happen, and AI itself is making it much easier to hack systems. Irresponsible use of data leads to reputational crises, and that’s something we don’t need to A/B test.

Negative Personalization Experiences

Two-thirds of consumers have had at least one negative experience with personalization that led them to end the interaction, per Boston Consulting Group. The aforementioned DRIP research notes that some personalization attempts succeed because they remove psychological triggers or friction at critical decision points, whereas others consistently underperform because they introduce changes that conflict with established user expectations.

Technical Complexity

Run A/B tests across thousands of individual consumer touchpoints at once, and you’ve bitten off a fair chunk from a data integration and strategy standpoint. One place you don’t want to end up is black-box territory, where nobody really knows what’s going on. Simply put, you still need experts who can intellectually and practically grasp what is happening.

AI can automate the functions, but that doesn’t make the process any less complex, especially when you take automation bias into account.

Automation Bias

Automation bias is the tendency to favor algorithmic suggestions over contradictory human observations. You’re essentially using artificial intelligence as a heuristic replacement for critical thought. The tools excel at spotting correlations but lack contextual awareness, meaning they fail to fully account for current affairs or brand values that may render some conclusions moot.

Scaling Experiments Without Losing the Humanity

AI-powered A/B testing can do wonders for your personalization and conversion rates, but results may vary. With hyper-personalization prominent and growing, it’s a good idea to start thinking about what you can test and how you can integrate AI into the process to streamline your workflow.

However, artificial intelligence is not a replacement for the humans running the tests, and the ethical implications of a mishap can be harrowing. So, as you get started, keep your AI-driven A/B testing within the limits of your data team’s technical capabilities and discernment, and scale your tests only as fast as your people can manage them.

Note: This article was originally published on contentmarketing.ai.