SEO is ever progressing; the strategies that were applied just last year won’t necessarily play the same role today in your attempt to place your content as a top search result. Let us bring you up-to-date.

Here, we’re going to unpack technical SEO: its definition, types, common technical SEO errors, and importantly, an essential technical SEO checklist to ensure that your content is optimally set up and ultimately yields SEO success. Let’s jump in!

What is Technical SEO?

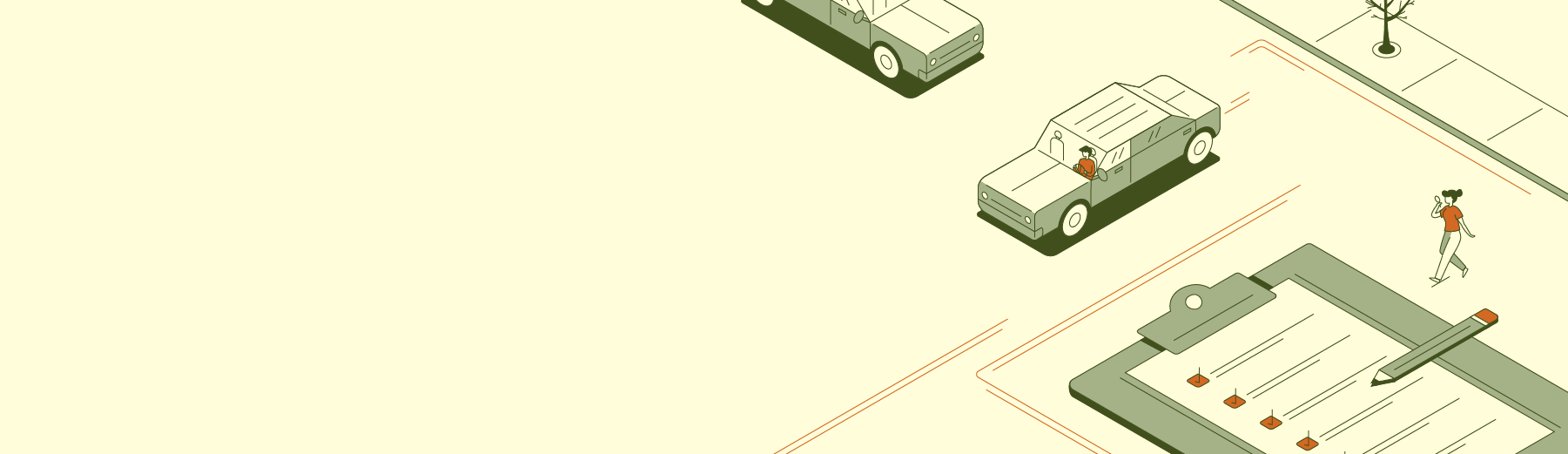

Your website is like a car. Some features are supposed to be seen and interacted with: your webpage copy, images, graphics, videos, form fills, navigation, etc. These are akin to a vehicle’s paint job, body, dashboard and interior seating.

Then there are the unseen elements of your site: your robots.txt file, your CSS, HTML and JavaScript files, your backend configurations, etc. Like the gears, tubes, hoses, gaskets and other components under the hood of a vehicle, they aren’t pretty or intuitive. But they are central to the performance of your website.

Technical search engine optimization (SEO) is concerned with all the non-intuitive stuff that influences search engines’ ability to crawl your site, understand its content and, ultimately, index your pages in search results.

Without technical SEO, you can’t optimize your ranking. You’d just be spinning your wheels.

Where Technical SEO Fits Into Your Digital Marketing Strategy

There are two main types of SEO:

1. On-Page SEO: Activities on your site to help it rank better in search engine results pages (SERPs):

- Content optimization: creating blog posts and landing pages that help you rank for specific keywords people are typing into search engines.

- Technical SEO: optimizing the structural, backend elements of your site that influence a search engine’s read of your webpages.

2. Off-Page SEO: Activities you do off your site to help it rank better.

- Link building: earning links from other blogs and websites back to your pages.

- Social media and email marketing: promoting your on-page content through off-page channels like Facebook and email.

You need both to rank well on search engines.

That said, you can’t rank at all if your site isn’t indexable in SERPs — and even if it is, a quagmire of technical issues can foil your frontend SEO efforts and send your site plummeting to page 2. The same is true if your site has crawl errors.

In this sense, technical SEO is the engine behind all of your digital marketing campaigns. And like a real engine, a lot goes into building and optimizing it and maintaining its performance.

Checklist breakdown

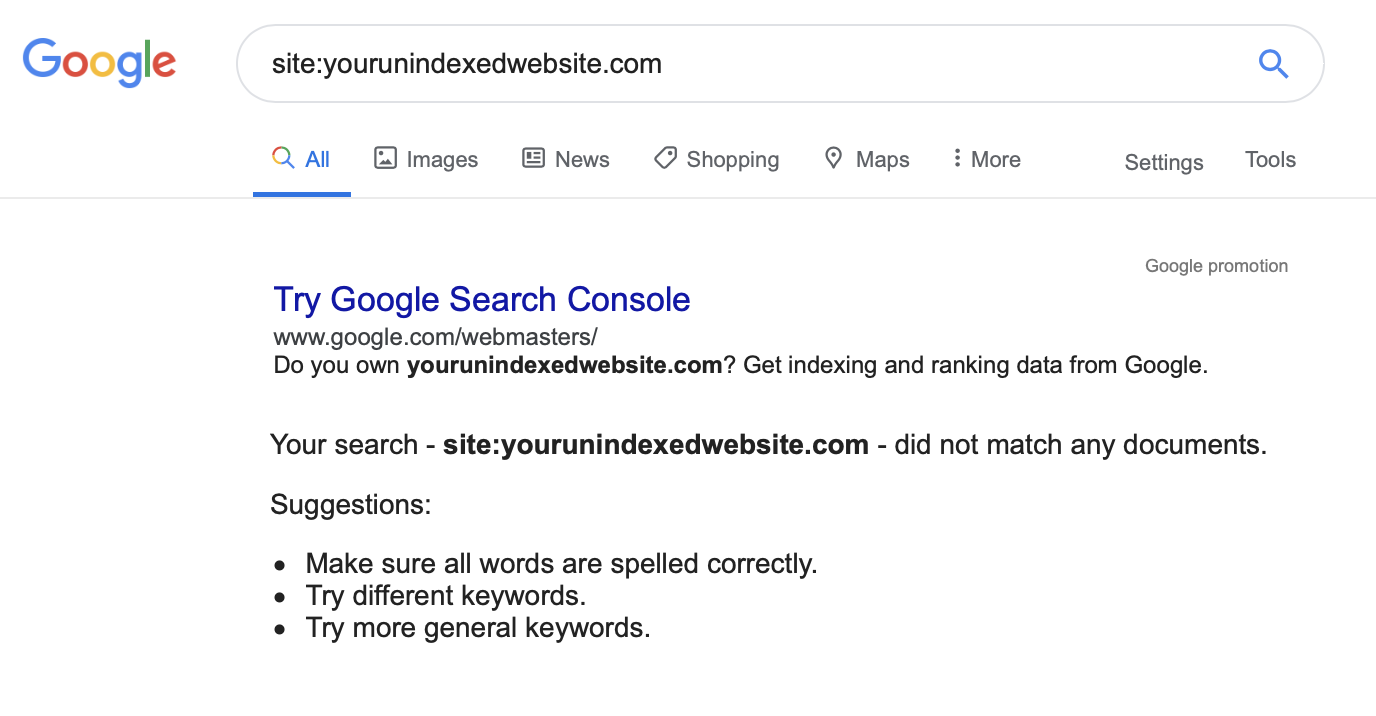

Below are 11 steps that will help guide you in the right direction toward a website that is technically sound — ensuring an optimal performance in search engines.

Item No. 1: Indexing

Indexing is how you tell Google which of your webpages you want to appear in search results, and which you want to exclude.

Some pages — like your product pages — are useful to a searcher. Others — like an order-processing page — are not. You only want Google to rank the useful ones. Otherwise, the pages that aren’t useful to someone searching the web will be factored into how search engines rank your site. Other pages you might not want to index include:

- Any duplicate pages on your site or between multiple web properties you might own.

- Internal search results pages.

- Thank you pages.

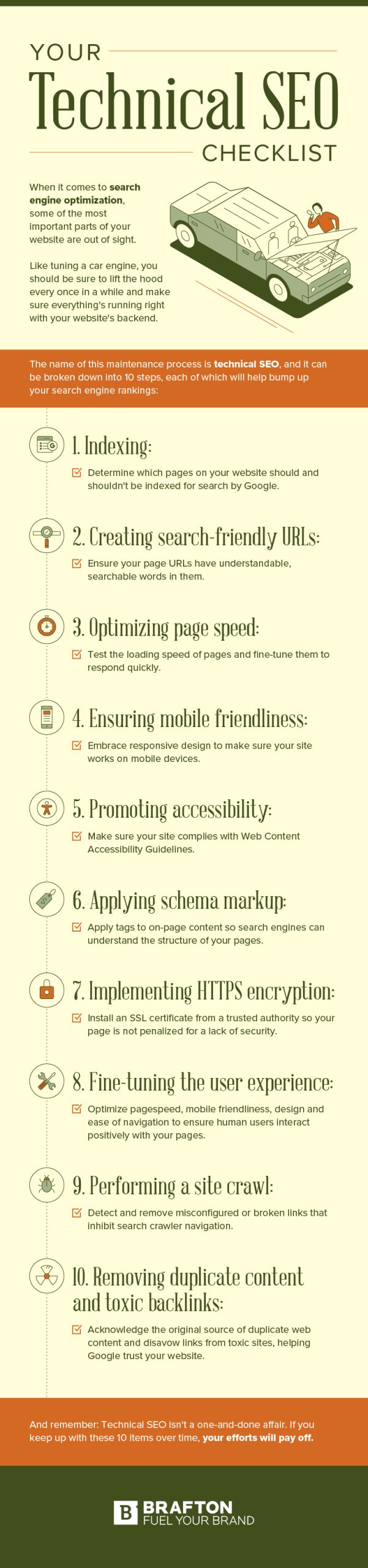

Unless your site is brand new, it is probably already indexed. The easiest way to tell is to perform a simple Google search of your domain (for example, by searching for site:yourdomain.com). If it appears in SERPs, you know your site is indexed:

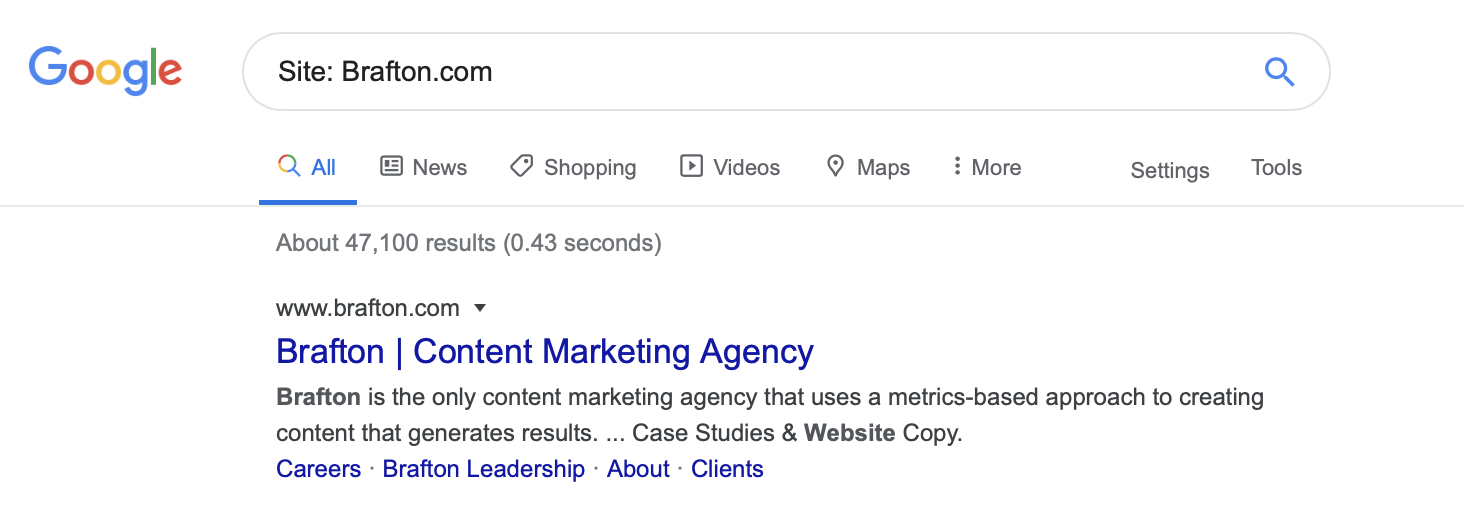

If it doesn’t appear in the Google SERP, you’ll receive the prompt, “Try Google Search Console.”

With that in mind, here are the checklist items associated with site indexing:

Create a Google Search Console account: Search Console (formerly Google Webmaster Tools) lets you see if and when your site was last indexed, any existing indexing errors, etc. The Bing equivalent of Search Console is Bing Webmaster Tools.

Index your site: Assuming your webpages aren’t instructing Google otherwise, the search engine will eventually index them on its own.

Create an XML sitemap and upload it to Search Console: An XML sitemap is a list of every important URL on your website. It helps Google find all of the critical pages on your site. It also tells Google when each page was last updated and its priority relative to other pages on the site. An XML sitemap helps Google index your site more quickly, and can ensure that the site is recrawled and reindexed regularly.
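To make the sitemap step more concrete, here is a minimal Python sketch that builds one with the standard library. The example.com URLs, dates and priority values are placeholders, and the helper name build_sitemap is ours for illustration; in practice a sitemap generator or SEO plugin will usually produce this file for you.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML for (url, last_modified, priority) tuples."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified, priority in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        # Date in YYYY-MM-DD form, e.g. 2023-11-01.
        ET.SubElement(entry, "lastmod").text = last_modified
        # Priority relative to other pages on the same site, 0.0 to 1.0.
        ET.SubElement(entry, "priority").text = f"{priority:.1f}"
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Placeholder pages; swap in your own important URLs.
    print(build_sitemap([
        ("https://www.example.com/", "2023-11-01", 1.0),
        ("https://www.example.com/blog/", "2023-10-15", 0.8),
    ]))
```

Save the output as sitemap.xml at the root of your site, then submit its URL in Search Console.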

Use the <meta name="robots" content="noindex"> tag for pages you do not want indexed: You can automatically add this tag (no coding necessary) to pages as you post them via the Yoast SEO WordPress plugin. For pages that have already been indexed, you will have to submit a de-index request via Search Console.
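If you want to spot-check which pages carry the noindex directive, a short script can help. The sketch below uses Python’s built-in HTML parser; the function name is_noindex and the sample markup are illustrative assumptions, and it only inspects the robots meta tag in the HTML you pass in, not HTTP headers.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect the directives found in <meta name="robots"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        if (attributes.get("name") or "").lower() != "robots":
            return
        content = attributes.get("content") or ""
        # Directives are comma-separated, e.g. "noindex, follow".
        for part in content.split(","):
            if part.strip():
                self.directives.add(part.strip().lower())


def is_noindex(html):
    """Return True if the page's robots meta tag contains noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


if __name__ == "__main__":
    thank_you_page = (
        '<html><head><meta name="robots" content="noindex"></head></html>'
    )
    print(is_noindex(thank_you_page))
```

Run it against the HTML of your thank-you pages and internal search results pages to confirm they are excluded.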

Use the rel="nofollow" attribute: De-indexing a page will prevent it from showing up in search results, but it won’t necessarily hide the page from search crawlers. Googlebot can still discover a page if you have linked to that de-indexed page somewhere else on your website, or if someone links to it on theirs. Adding rel="nofollow" to the links you control asks Google not to follow them to that URL. Keep in mind that Google treats nofollow as a hint rather than a guarantee, and it has no effect on links from other sites, so pair it with the noindex tag for pages that must stay out of search results. Using the Yoast plugin is the easiest way to do this.

Submit a robots.txt file to Google Search Console: This text file tells crawlers which pages and files they can request from your site, and how to crawl them. It must be named “robots.txt” (all lowercase) and it must be placed in the website’s top-level directory. Note that crawling is not the same as indexing. Robots.txt won’t necessarily stop your pages from being indexed.
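Before uploading the file, you can sanity-check its rules locally. This sketch relies on Python’s standard urllib.robotparser module; the rules and example.com URLs are made-up placeholders, not recommendations for your site. Python’s parser may not interpret every rule exactly the way Googlebot does, so treat the result as a rough check.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: keep crawlers out of checkout and internal search.
ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Disallow: /search
Sitemap: https://www.example.com/sitemap.xml
"""


def crawl_permissions(robots_txt, urls, user_agent="Googlebot"):
    """Map each URL to True (crawlable) or False (blocked by the rules)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch(user_agent, url) for url in urls}


if __name__ == "__main__":
    results = crawl_permissions(ROBOTS_TXT, [
        "https://www.example.com/blog/technical-seo/",
        "https://www.example.com/checkout/step-1",
        "https://www.example.com/search?q=seo",
    ])
    for url, allowed in results.items():
        print("allowed" if allowed else "blocked", url)
```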

Tools you’ll need: Google Search Console, WordPress, Yoast SEO plugin (or MonsterInsights plugin), an XML sitemap generator.

Item No. 2: Making Search-Friendly URLs

Your website URLs should be easy to understand for search engines and human users alike. Long, inscrutable strings of scrambled letters and numbers convey no information about a page. Keep your URLs simple and understandable with these tips:

- Shorter is generally better.

- Use plain-text, easy to understand terms. Here is an example: “https://www.brafton.com/blog/email-marketing/email-marketing-blogs/”

- Use hyphens to separate words.

- Use all lowercase.

- Limit the use of underscores or special characters (hashes can send users to a specific section of a page, but use this sparingly).

- Easily adjust your URLs in WordPress; make sure you include the main keyword you’re trying to rank for within the URL.
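The tips above can be sketched as a small slug helper. This is an illustration in Python rather than what WordPress does internally; the slugify name and the max_words limit are our own assumptions.

```python
import re
import unicodedata


def slugify(title, max_words=6):
    """Turn a page title into a short, lowercase, hyphen-separated slug."""
    # Strip accents so "Café" becomes "Cafe".
    ascii_text = (
        unicodedata.normalize("NFKD", title)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    # Keep runs of letters and digits; underscores and symbols are dropped.
    words = re.findall(r"[a-z0-9]+", ascii_text.lower())
    # Shorter is generally better, so cap the number of words.
    return "-".join(words[:max_words])


if __name__ == "__main__":
    print(slugify("Email Marketing Blogs"))
    print(slugify("10 Technical_SEO Tips & Tricks!"))
```

Capping the word count is a blunt way to keep slugs short; you will still want to confirm by hand that the main keyword survives the cut.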

Tools you’ll need: A CMS (e.g. WordPress), a keyword research tool (e.g. Semrush), common sense.


Item No. 3: Page Speed

How quickly your web pages load is a direct ranking factor. It’s also an indirect ranking factor: slow loading times increase bounce rate (the share of users who leave after viewing only one page). If you use a VPS for WordPress, you will have a hosting environment that is better suited to your website’s needs, which improves your chances of getting the performance you’re after.

That’s why it’s important to (a) test your site speed using the Google PageSpeed Insights tool and (b) take steps to optimize page speed to avoid ranking penalties and high bounce rates.

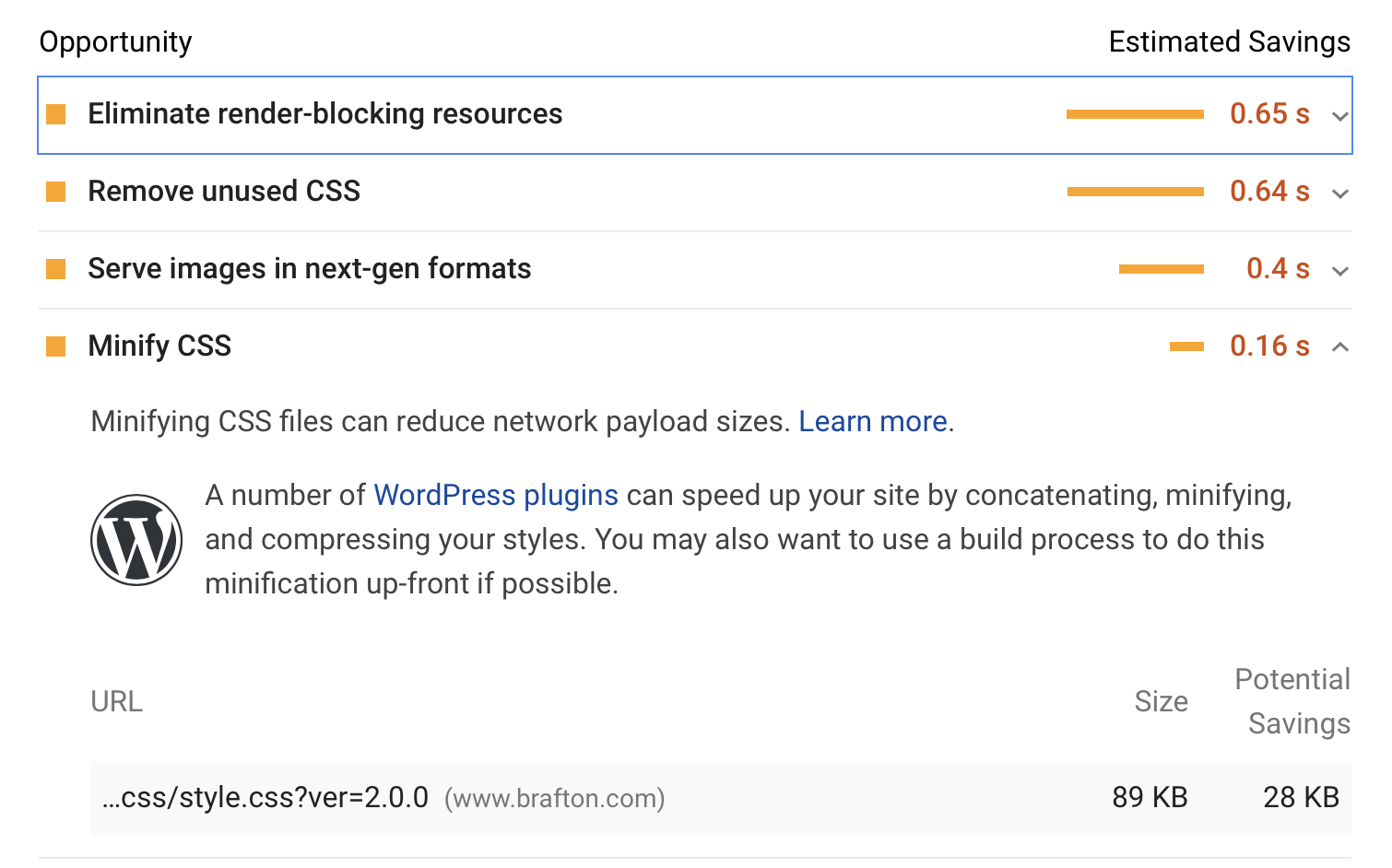

Note that PageSpeed Insights provides actionable steps to increase page speed and even links out to resources, such as WordPress plugins, that can help you.

In this sense, the best checklist for page speed is whatever PageSpeed Insights tells you to do.

However, nearly any website publisher can benefit from taking certain key steps.

(Warning: This is quite possibly the most technical section of this entire post.)

- Optimize images: There are three things to keep in mind here:

  - Use PNG for simple graphics with 16 or fewer colors and JPEG for photos and advanced graphics.

  - Start using the srcset attribute in your HTML code. This lets you offer multiple versions of an image so the browser can pick the one best suited to the visitor’s screen.

  - Use CSS sprites for images that you use frequently, like logos and buttons. This essentially combines multiple images into a single image file so there are fewer HTTP requests.

- Use browser caching: Browsers can cache information such as images and style sheets to improve loading time for users. Set cache expiration policies on your server, and use a tool such as YSlow to check that they are in place.

- Reduce redirects: Every time a link on one of your pages redirects to another page, you lose a little bit of loading time, so try to minimize the number of redirects on your site.

- Bundle files: Bundling places coding language files into one big file (e.g., all the JavaScript files in one file, all the CSS files in another and so on).

- Prioritize above-the-fold content: Structure your HTML code to run content that appears above the fold (visible without scrolling) first. Technically, this does not increase page speed; however, it gives the user something to see and interact with while the rest of the page loads, which improves perceived page performance and therefore helps reduce bounce rates.

- Minify CSS, HTML and JavaScript files: Minification removes all unnecessary characters like spacing and developer comments from your code. Tools like UglifyJS2 (for JavaScript), cssnano (for CSS) and HTMLMinifier (for HTML) can help you optimize code so your pages load faster.

- Use Async: Work with your developers to set scripts to “Async” when they aren’t needed to render content above the fold. This ensures the browser won’t pause site assembly when it needs to fetch a script necessary to display the rest of your webpage. Use developer time-tracking techniques and time tracking software to complete tasks more effectively.
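Returning to the srcset tip in the image list above, here is a rough Python sketch of how the attribute is assembled. The file-naming pattern (hero-480w.jpg and so on) and both function names are hypothetical; your CMS or image plugin may generate renditions under different names.

```python
from html import escape


def build_srcset(base_name, widths, extension="jpg"):
    """Return a srcset value listing one rendition per pixel width."""
    return ", ".join(
        f"{base_name}-{width}w.{extension} {width}w" for width in sorted(widths)
    )


def responsive_img(base_name, widths, alt, sizes="100vw", extension="jpg"):
    """Return an <img> tag whose fallback src is the smallest rendition."""
    smallest = min(widths)
    return (
        f'<img src="{base_name}-{smallest}w.{extension}" '
        f'srcset="{build_srcset(base_name, widths, extension)}" '
        f'sizes="{escape(sizes)}" alt="{escape(alt)}">'
    )


if __name__ == "__main__":
    print(responsive_img("hero", [960, 480, 1440], "Team at work"))
```

The browser reads the width descriptors (480w, 960w, 1440w) together with the sizes attribute and downloads only the rendition it needs, which keeps image weight down on small screens.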

Tools you’ll need: Your web developers, Lighthouse, Google PageSpeed Insights, GTmetrix, YSlow, UglifyJS2, cssnano, HTMLMinifier.

Item No. 4: Mobile Friendliness

Google prioritizes the mobile version of your site for ranking and indexing. This means its bots look at the mobile version before the desktop version.

For the most part, mobile SEO is the same as desktop SEO — but with a few crucial exceptions. Namely, your web pages will display differently on mobile than on desktop. It’s your job to ensure they load well across different devices and that they don’t disrupt the user experience.

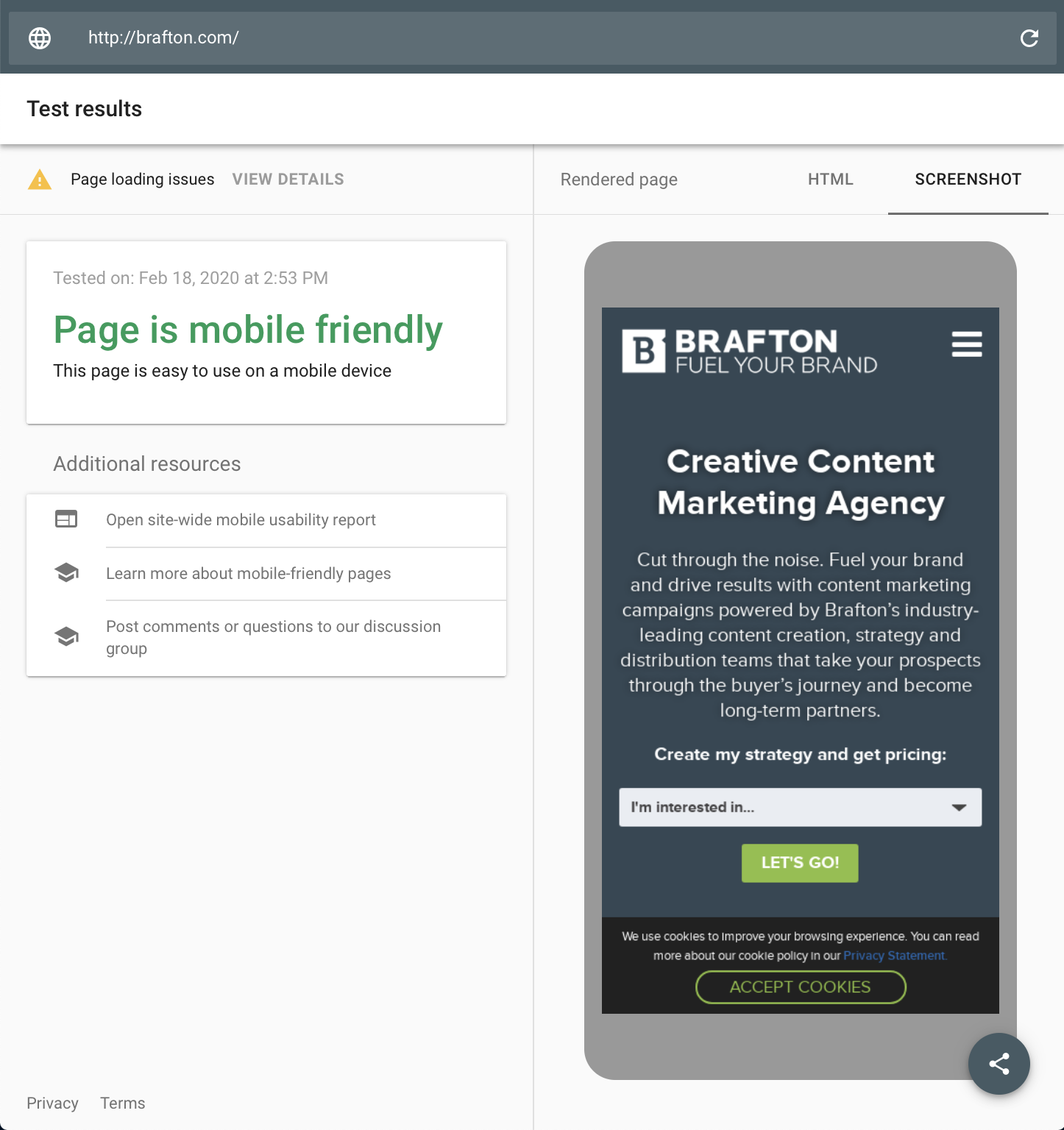

The easiest way to find out if your website is mobile friendly is with the Google Mobile-Friendly Test. Just copy your site’s URL into the tool and it will tell you whether your site is optimized for mobile.

Some of the main factors to consider when making your site mobile friendly include the following:

- Use responsive design: With responsive design, your website code automatically responds to the screen you’re viewing it on. You can either use a responsive template (such as a WordPress theme), or you can manually make each page responsive.

- Use Viewport Meta Tag: Copy <meta name="viewport" content="width=device-width, initial-scale=1"> into the code for each of your webpages. This technique is especially useful for publishers who need to manually make their site mobile friendly. However, your code may require some fine-tuning (e.g. CSS media queries to set relative width values for certain on-page elements).

- Remove Flash from your site: It’s no longer supported by default on most major mobile devices.

- Focus on page speed: Page speed is even more important on mobile than on desktop, so it’s that much more critical that you do everything we discussed earlier in this post.

- Use Accelerated Mobile Pages (AMP) only as a last resort: AMP is an open-source project that isn’t for everyone. It creates a compressed, stripped-down version of a page to improve its speed and performance. However, this means it eliminates some of your site’s bells and whistles, and should therefore be used sparingly (if at all).

Tools you’ll need: Google’s Mobile-Friendly Test, Lighthouse, PageSpeed Insights, Mobile Checker by W3C, BrowserStack.

Item No. 5: Accessibility

Some website publishers have faced lawsuits for failing to comply with the Americans With Disabilities Act. Perhaps the most high-profile example involved a suit against Beyonce’s Parkwood Entertainment website, which was not making accommodations for visually impaired users.

While the ADA does not provide explicit guidance for website compliance, adhering to the Web Content Accessibility Guidelines (WCAG) should help you cover your bases. Make sure you’re checking the following boxes on your site:

- All content is navigable via keyboard.

- All images have alt text.

- Content should not blink more than 3 times per second.

- Use semantic markup (<H1>, <H2>, <p>, etc.).

- Use ARIA labels (this markup helps screen readers and other assistive technologies better understand the purpose of certain navigation features).

- Use sufficient color contrast.

- Use text alternatives for graphs represented as images.

- Provide captioning and/or descriptive text.
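Of the items above, color contrast is the easiest to check with a quick calculation. The sketch below follows the relative luminance and contrast ratio formulas defined in WCAG 2.x, and uses the 4.5:1 threshold that WCAG sets for normal-size text at level AA. The function names are ours, and the sketch assumes six-digit hex colors.

```python
def _linearize(channel):
    """Convert an 8-bit sRGB channel to its linear-light value."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """Relative luminance of a color written as '#rrggbb'."""
    value = hex_color.lstrip("#")
    red, green, blue = (int(value[i:i + 2], 16) for i in (0, 2, 4))
    return (
        0.2126 * _linearize(red)
        + 0.7152 * _linearize(green)
        + 0.0722 * _linearize(blue)
    )


def contrast_ratio(foreground, background):
    """Contrast ratio between two colors, from 1.0 up to 21.0."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def meets_aa(foreground, background):
    """True if the pair reaches 4.5:1, the AA minimum for normal text."""
    return contrast_ratio(foreground, background) >= 4.5


if __name__ == "__main__":
    print(round(contrast_ratio("#000000", "#ffffff"), 2))
    print(meets_aa("#777777", "#ffffff"))
```

Tools such as WAVE run this kind of check across a whole page, so a script like this is mainly useful when you are choosing brand colors up front.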

Tools you’ll need: WCAG guidelines, AChecker, WAVE, CKEditor 4 Accessibility Checker.

Item No. 6: Schema Markup

There are two types of website markup that web publishers can use to structure data so it is more easily understood by search engines:

- Semantic: Helps search engines understand structure of the site better (e.g., title tag, headers, etc.).

- Schema: Helps search engines better contextualize subject matter (e.g., the difference between “Avatar” the movie versus an avatar in an application).

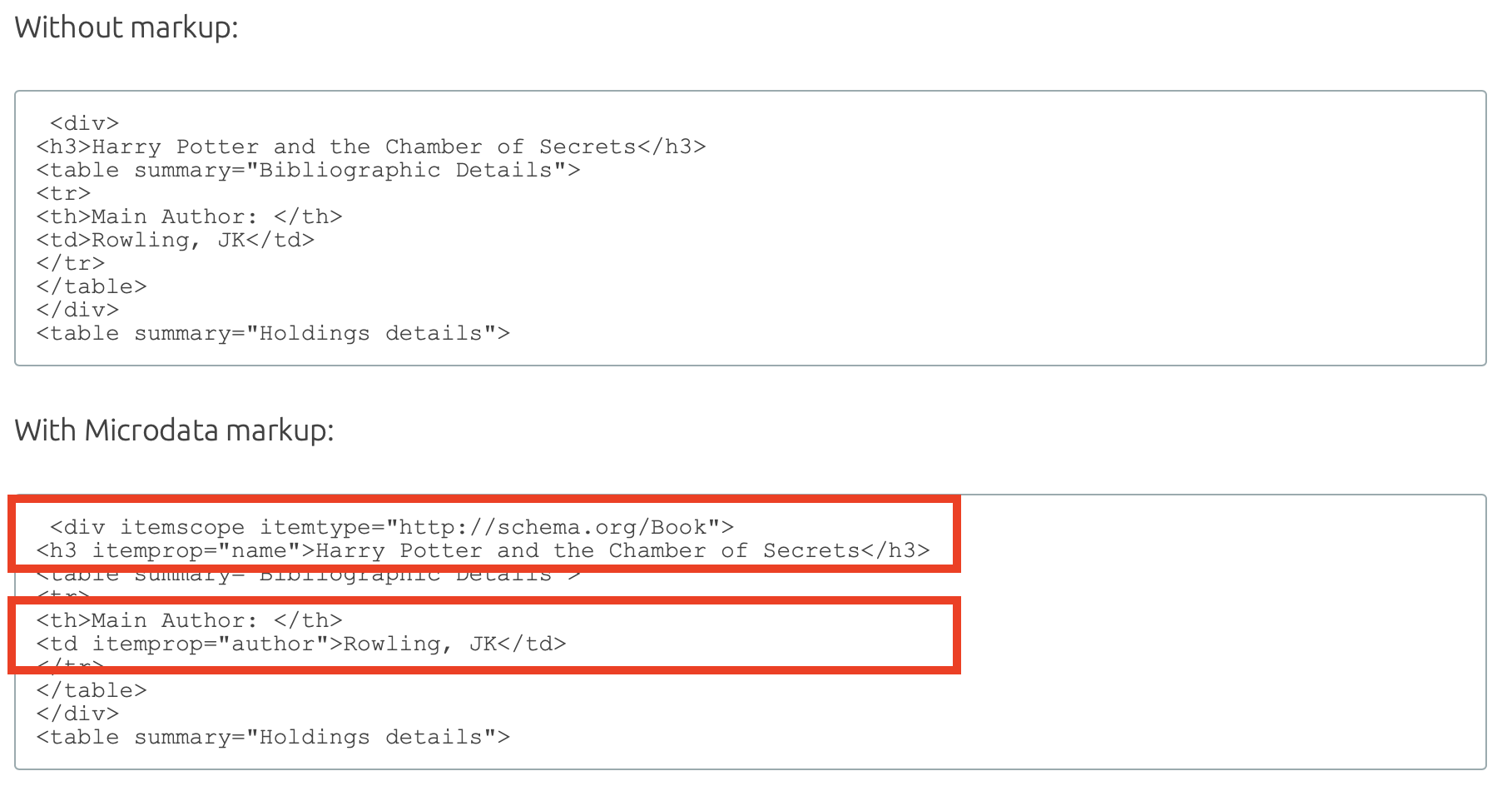

The simplest way to think of schema markup is as tags that tell Google what a thing is. For instance, “Harry Potter and the Chamber of Secrets” is a “book.” You can indicate as much with schema markup such as Microdata.

As for the checklist, the only real step here is to use one of the following schema markup languages to help better structure your code for Google:

- JSON-LD.

- Microdata.

- RDFa.
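As a rough illustration of the first option, this Python sketch emits JSON-LD for the Harry Potter example, using the Book and Person types from the schema.org vocabulary. The helper name book_json_ld is hypothetical, and a real page would normally carry more properties than the two shown here.

```python
import json


def book_json_ld(name, author):
    """Return a <script> block describing a book with schema.org JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Book",
        "name": name,
        "author": {"@type": "Person", "name": author},
    }
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(data, indent=2)
        + "\n</script>"
    )


if __name__ == "__main__":
    print(book_json_ld("Harry Potter and the Chamber of Secrets", "J.K. Rowling"))
```

The resulting script block goes in the HTML of the page that the data describes.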

Tools you’ll need: A resource that simplifies schema markup, namely Google Data Highlighter (if you have access to Search Console); Google Structured Data Markup Helper, MicrodataGenerator.com or Schema Markup Generator by Merkle.

Item No. 7: HTTPS Encryption

If you don’t use HTTPS encryption, browsers will label your pages as not secure, search engines will penalize you and users will lose trust in you.

HTTPS stands for Hypertext Transfer Protocol Secure. It indicates to a user that their browser’s connection to a website is secured with encryption. In other words, it prevents an interloper from tampering with the connection between a user’s browser and your website.

Keep in mind that HTTPS is a direct ranking signal. Google, Bing and other search engines obviously don’t want to send users to potentially vulnerable websites.

Tools you’ll need: An SSL certificate from a certificate authority (Symantec, Comodo, IdenTrust, GlobalSign, DigiCert), a tool to help you install that certificate (Really Simple SSL).

Item No. 8: User Experience

User experience (UX) is not a direct ranking factor. However, poor UX can negatively affect your ranking in other ways. Slow loading time, poor website navigation, excessively long form fills, unsightly or amateurish graphic design, mobile unfriendliness and other on-page UX elements can influence how a user engages with your site. That, in turn, can impact your ranking.

Make sure you’re doing the following to optimize UX:

- Look at bounce rate, session time, CTR, conversions and other Google Analytics data to try to diagnose potential UX issues.

- Optimize your site’s page speed (see the page speed section above).

- Make sure your pages are optimized for mobile (see the mobile optimization section above).

- Use breadcrumbs (especially for bigger sites).

- Create an HTML Sitemap that is visible to users.

- Perform a website audit using tools such as HotJar (for user heat mapping).

- Use strategically placed calls-to-actions and conversion tools to drive positive engagements with your brand.

Tools you’ll need: Google PageSpeed Insights, Google’s Mobile-Friendly Test, Google Analytics, HotJar, call-to-action tools (e.g., OptinMonster).

Item No. 9: Crawl Optimization

When Googlebot or another search engine crawler fetches your webpages, it may run into various types of site or URL errors.

A site error such as a DNS error, 500 error or timeout indicates that a search bot is unable to access your site at all – and that is very bad.

If bots can’t crawl your site, the search engine will either lower the ranking or drop your site from the index altogether. Site errors require immediate attention, but are relatively easy to spot. If bots can’t connect to your web servers, then chances are you can’t either.

A URL error, on the other hand, is much easier to miss. Your site may have hundreds if not thousands of internal and external links. Over time, some of those links may change, break or move, thereby preventing Google from being able to crawl them. This will not result in your site being deindexed, but it can hurt your ranking.

It’s incredibly important to scan your site for broken or misconfigured links that inhibit proper crawling. The best tool for the job is Screaming Frog. Use it to identify all of the following, and more:

- 404 errors: Indicates that the server could not find the page a browser or crawler requested.

- Soft 404 errors: This happens when a server responds to say that a request has succeeded, but the search engine thinks that the page should return a 404 error. Pages with no content, or very thin content, may cause this error.

- 403 forbidden errors: Indicates that a certain page is absolutely forbidden to anyone without the proper credentials. Try to avoid linking to pages that are forbidden to users on your website.

- 301 vs 302 redirects: A 301 redirect is a permanent redirect of one page to another page. You would use it if you were permanently moving a page. A 302 redirect is temporary. It is valuable if you’re performing maintenance on a page and want to temporarily direct the user to a different page.

Make a note to avoid mixing them up. A 301 redirect will pass most SEO value (link equity) from the original page to the new page. A 302 redirect, on the other hand, retains the SEO value on the original page since it is only meant to be temporary. Screaming Frog can show you all of your 301 and 302 redirects, but it is on you to check that you have used them correctly. Otherwise, you could be severely undercutting your SEO efforts for the redirected page.
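To review redirects from a crawl export, it can help to trace each chain and label every hop. The sketch below works on a plain dictionary of URL to (status code, redirect target) pairs, which is an assumed, simplified stand-in for whatever your crawler exports; it is not Screaming Frog’s file format. It covers only the 301 and 302 codes discussed above.

```python
def trace_redirects(start_url, responses, max_hops=10):
    """Follow redirects and return (hops, final_url, final_status).

    responses maps each URL to a (status_code, location) pair.
    """
    hops = []
    url = start_url
    for _ in range(max_hops):
        status, location = responses[url]
        if status == 301:
            kind = "permanent"
        elif status == 302:
            kind = "temporary"
        else:
            # Not a redirect: this is where the chain ends.
            return hops, url, status
        hops.append((url, status, kind))
        url = location
    raise RuntimeError(f"More than {max_hops} redirects from {start_url}")


if __name__ == "__main__":
    # Made-up crawl data for illustration.
    crawl = {
        "/old-page": (301, "/new-page"),
        "/new-page": (302, "/maintenance"),
        "/maintenance": (200, None),
    }
    print(trace_redirects("/old-page", crawl))
```

A 302 that has sat in a chain for months is a good candidate to review, since a move that is really permanent should use a 301.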

Tools you’ll need: Screaming Frog SEO Spider, Broken Link Checker, Yoast SEO plugin.

Item No. 10: Duplicate Content and Toxic Backlinks

Duplicate content is content that appears in more than one place on the web (meaning the content is the same but it’s appearing under two unique URLs). Search engines get confused by duplicate content. They don’t know which version to index or rank in queries.

Toxic backlinks are any links back to your site from untrustworthy or spammy sources.

Both of these issues are relatively easy to detect and fix:

- For duplicate content:

  - Identify duplicate content on your site using a tool like Siteliner or RavenTools.

  - Use the rel="canonical" link element on the duplicate page to point to the original version. This works like a soft redirect: it does not redirect the user; it just tells Google which version is more important for indexing purposes. The Yoast SEO WordPress plugin makes this easy.

  - Also use rel="canonical" for any content you syndicate, meaning any content you post on your site and on another blog or industry source.

- For toxic backlinks:

  - Perform a link audit using Semrush Backlink Audit.

  - Disavow the backlink through Google Search Console.
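For the duplicate content side, a quick way to see the idea is to fingerprint each page’s text and group URLs that share a fingerprint. This Python sketch only catches copies that are identical once case and whitespace are normalized, so it is a far cruder check than a dedicated tool; the function names and sample pages are invented for illustration.

```python
import hashlib
import re
from collections import defaultdict


def content_fingerprint(text):
    """Hash page text after normalizing case and whitespace."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_duplicates(pages):
    """Group URLs whose text is identical; pages maps URL to body text."""
    groups = defaultdict(list)
    for url, text in pages.items():
        groups[content_fingerprint(text)].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


if __name__ == "__main__":
    pages = {
        "/shoes": "Red running shoes.\nFree shipping!",
        "/shoes?ref=email": "Red  running shoes. Free shipping!",
        "/boots": "Leather hiking boots.",
    }
    print(find_duplicates(pages))
```

Each group it reports is a candidate for a rel="canonical" link pointing at the version you want indexed.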

Tools you’ll need: Siteliner or RavenTools, Yoast SEO plugin (for duplicate content); Semrush, Google Search Console (for toxic backlinks).

Item No. 11: Fix Broken Links (Internal and Outbound)

Broken links, both internal and outbound, have a host of negative implications for your site’s performance. Aside from impeding the user experience and disrupting navigation, broken links can also hinder the crawling process and, in turn, affect your site’s indexation.

For broken internal links, consider using a tool like Screaming Frog or Google Search Console to identify them first. You can also look for 404 errors manually and change links accordingly.

Similarly for broken outbound links, you can manually check if the external websites that you’re linking to are still active, or lean on a tool like Semrush to scan for broken links. For both internal and outbound links, it’s important to ensure that you’re periodically auditing your site and keeping an eye on related user feedback flagging any potential broken links.
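If you prefer to start an audit by hand, the first step is simply listing the links on a page and separating internal from outbound ones. Here is a minimal Python sketch of that step using only the standard library; the function name split_links and the example.com addresses are assumptions for illustration, and checking whether each link actually resolves is left to your crawler of choice.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collect the href value of every <a> tag."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def split_links(page_url, html):
    """Return (internal, outbound) lists of absolute http(s) links."""
    collector = LinkCollector()
    collector.feed(html)
    site = urlparse(page_url).netloc
    internal, outbound = [], []
    for href in collector.hrefs:
        absolute = urljoin(page_url, href)  # resolve relative links
        parsed = urlparse(absolute)
        if parsed.scheme not in ("http", "https"):
            continue  # skip mailto:, tel: and similar links
        if parsed.netloc == site:
            internal.append(absolute)
        else:
            outbound.append(absolute)
    return internal, outbound


if __name__ == "__main__":
    sample = '<a href="/pricing">Pricing</a> <a href="https://other.example.org/">Partner</a>'
    print(split_links("https://www.example.com/blog/", sample))
```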

Tools you’ll need: Screaming Frog SEO Spider, Google Search Console, Semrush.

A Round Up

Your website’s technical components need to be set up properly for your pages to run well and continue to be accessible to Googlebot and other search engine crawlers.

But technical SEO also requires ongoing maintenance.

As you add new content to your site, you need to be diligent about optimizing it on the backend with schema and other technical SEO best practices. Make sure you service your site from time to time – e.g., check for toxic links, URL errors, issues affecting your page speed.

Also, be mindful of search engine algorithm changes and how they may affect the way bots crawl your content.

It’s a lot to stay on top of, but the effort is worth it. Tend to the technical side of things, and your site will return the favor by driving leads deeper into your sales funnel.

Editor’s Note: Updated November 2023.